Broadleaf Services Teams with Echelon Services and SPAARK to Deliver Strategic Data Analytics Support Services to a DoD Agency

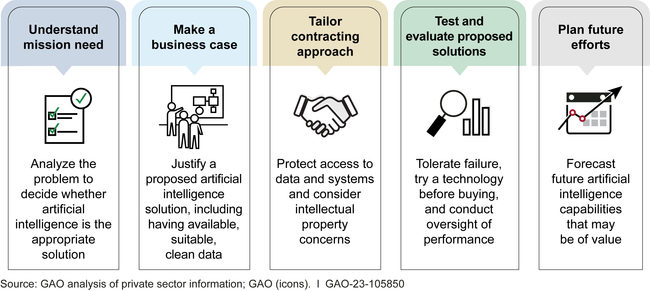

Description of Work Performed: Broadleaf Services provides a broad spectrum of analytical, data visualization, data exploitation, management consulting, and support services necessary for implementing Artificial Intelligence/Machine Learning (AI/ML) applications in the contract administration field. Specifically, Broadleaf Services applies its professional consulting expertise to the full range of business intelligence program and project management services needed to implement a data strategy for the Defense Contract Management Agency (DCMA), a strategy that is expected to evolve over the contract’s period of performance. These capabilities span a wide range of functional data management disciplines, including data exploitation and analysis, data visualization and dashboard strategy, application program and project management, and data management.

Broadleaf Services provides the Contracting Officer’s Representative (COR) with Monthly and Quarterly Progress Reports covering all work completed during the reporting period and work planned for the subsequent reporting period. These reports also identify any problems that arose and describe how they were resolved. For any unresolved issues, Broadleaf Services provides an explanation, including a plan and timeframe for resolution. We monitor progress against the Performance Plan and report any deviations to prevent the need for escalation.

Background of DCMA Analytic Requirement: The DCMA Chief Data Officer (CDO) requires additional resources and expertise to efficiently and effectively execute its responsibility for managing the Agency’s data resources and ensuring that DCMA complies with Federal law, Department of Defense (DoD) Directives and oversight, and internal policy and requirements. Legal and statutory requirements include those specified in the Paperwork Reduction Act, the Privacy Act, the Federal Records Act, the Freedom of Information Act (FOIA), the Clinger-Cohen Act, and the Data Quality Act. Federal oversight requirements include policy and guidance documents issued by the Office of Management and Budget (OMB), the Government Accountability Office (GAO), and the Executive Office of the President. Internal requirements include those issued by the Agency’s Inspector General, the Agency’s Director, and the Chief Information Officer (CIO).

As a DoD Combat Support Agency, DCMA ensures the integrity of the contracting process and provides a broad range of contract-procurement management and administrative services to ensure that valued products are delivered promptly and ready for use by America’s Warfighters. DCMA works directly with Defense suppliers to ensure that DoD, Federal, and allied Government supplies and services are delivered on time, at projected cost, and meet all performance requirements. Headquartered at Fort Gregg-Adams, Virginia, DCMA employs approximately 10,000 civilian and military professionals across more than 800 distinct, supported employee duty stations worldwide. DCMA’s Chief Data and Analytics Office includes approximately 25 Government and contractor personnel around the world.

Broadleaf Services provides professional services in the following areas:

- Advanced Analytics

- Data Strategy Development and Implementation

- Data Science

- Performance Management Integration

- Data Analytics

- Dashboard and Data Visualization Support

- Data Visualization Sub-Tasks

- Application Development Support

- Application Development Support Sub-Tasks

- Artificial Intelligence (AI) and Machine Learning (ML) Planning and Implementation

- Enterprise Architecture (Data Architecture and Data Management)

- Research and Analysis of the Current State

- Conceptual Architecture Diagrams and Artifacts